About Me

Hi! I am a second-year PhD student in the Embedded, High Performance, and Intelligent Computing (EPIC) Lab at Colorado State University, Fort Collins. My advisor is Dr. Sudeep Pasricha, and working with him has been a highly rewarding academic journey so far. Under his guidance, I am actively engaged in developing optical accelerators for emerging AI models. I also contribute to modeling the application-level behavior of emerging in-memory computing paradigms, such as In-Flash Processing. Additionally, I serve as a Research Computing Analyst at CSU, helping the campus community leverage the Alpine HPC cluster for large-scale computing and AI workloads.

Research Interests

- Hardware/Software Codesign of Silicon Photonic AI Accelerators - GANs, Diffusion Models, Spiking Neural Networks

- In-Flash Processing - Modeling applications and quantifying system-level metrics for novel in-flash computing paradigms

- Benchmarking AI/ML Workloads - System-level evaluation of latency, throughput, and energy efficiency on GPUs, HPC clusters, and emerging accelerators

Conference Papers

- PhotoGAN: Generative Adversarial Neural Network Acceleration with Silicon Photonics, IEEE ISQED 2025

- Sustainable Acceleration of Generative AI Neural Network Models with Silicon Photonics, IEEE ICCD 2025

- TCFlash: In-Flash Bulk Bitwise Processing via Dynamic Sensing and TLC Encoding in 3D NAND, IEEE ICCD 2025

- Accelerating Diffusion Models for Generative AI Applications with Silicon Photonics, IEEE/ACM DATE 2026

Journal Articles

Education

- Ph.D. in Computer Engineering, Colorado State University (2024 - Present)

- M.S. in Electrical Engineering, Colorado State University (2021 - 2023)

- B.Tech in Electronics and Communication Engineering, Amrita Vishwa Vidyapeetham (2014 - 2018)

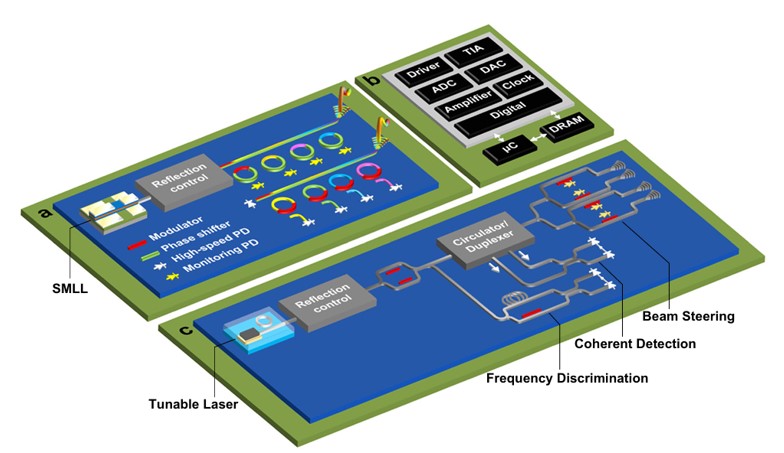

Optical Accelerators

In today's rapidly evolving AI landscape, there is a pressing need to explore emerging technologies for accelerating AI models. Silicon photonics, which uses light rather than electrical signals to carry and process data, has recently emerged as a promising alternative to traditional electronic platforms. Its high bandwidth and low latency make it possible to design accelerators that can be both faster and more energy-efficient than their electronic counterparts. With this goal in mind, my current research focuses on the hardware/software codesign of silicon photonic AI accelerators. Along this research path, I have had the opportunity to explore existing software packages for popular generative AI models and to study model compression techniques. Characterizing the hardware requirements (runtime and power consumption) of these models on conventional electronic platforms, such as GPUs, has also been crucial in laying the foundation for building photonic accelerators. Throughout this journey, I have had the privilege of learning from and collaborating with my student mentor Salma Afifi, whose guidance and dedication to research continue to inspire me every day.

In-Flash Processing

In-Flash Processing (IFP) is an emerging paradigm that performs bulk bitwise operations directly within raw NAND flash arrays, eliminating costly data movement between memory and compute units. This approach has shown strong potential for accelerating data-intensive workloads in domains such as genome sequencing, data analytics, graph processing, and cryptography by improving both performance and energy efficiency. Working with Habib Ur Rahman, I contribute to this research by modeling applications and quantifying system-level metrics for novel IFP paradigms. This work provides deeper insight into the performance and energy trade-offs of flash-based computing, helping pave the way for more efficient storage-centric architectures.

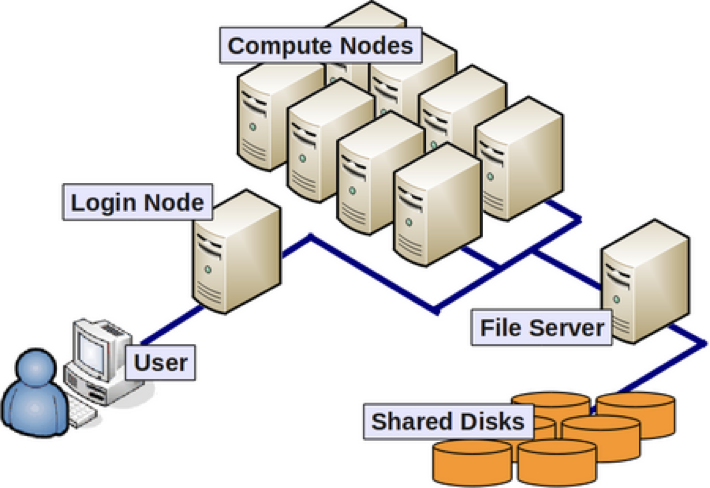

High-Performance Computing

High-performance computing (HPC) refers to the use of powerful supercomputers and parallel processing techniques to solve problems that are too large or complex for standard computers. It plays a critical role in enabling researchers and students to run large-scale simulations, analyze massive datasets, and train advanced AI models. As a Research Computing Analyst at CSU, I help the campus community effectively leverage Alpine, our heterogeneous supercomputing cluster. In this role, I guide users in using the Slurm workload manager to submit and manage their jobs. Beyond technical support, I help develop documentation and conduct workshops that empower students, faculty, and staff to accelerate their research through HPC.

Invited Talks

I was invited to speak at the AICTE-sponsored Six-Day Faculty Development Program (online) on "Next-Gen VLSI and Semiconductor Systems: Integrating Machine Learning for Intelligent Design Automation," hosted by R.M.D. Engineering College, Tamil Nadu, India. In my lecture, titled "Sustainable Acceleration of Generative AI Models Using Silicon Photonics," I presented an overview of my current research and its implications for sustainable AI acceleration. The session was followed by an engaging Q&A, which was a valuable opportunity to interact with undergraduate students, fellow researchers, and faculty members.